Image by Luke Chesser on Unsplash

To not miss out on any new articles, consider subscribing.

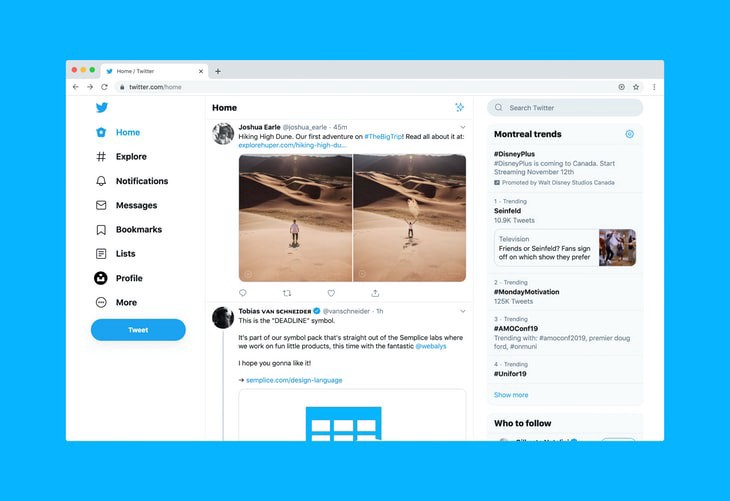

Using machine learning to classify tweets about real disasters or not

In the preceding article, I ran through the process of trying out different supervised machine learning algorithms to predict whether a tweet is about a real disaster or not. The project addresses the problem of fake news in the context of real disasters, and the dataset contains tweets and their corresponding labels (real or fake, denoted as 1 or 0). You can read Part 1 of this series here, as this article is a continuation.

Introduction

In this article, we switch gears from the bag-of-words (BoW) technique and training classifier models to a Recurrent Neural Network with Keras. According to the TensorFlow guide, recurrent neural networks (RNNs) are a class of neural networks that is powerful for modelling sequence data such as time series or natural language. Schematically, an RNN layer uses a for loop to iterate over the timesteps of a sequence, while maintaining an internal state that encodes information about the timesteps it has seen so far.¹
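
The for-loop description above can be sketched in plain Python. This is only a minimal illustration with a single scalar state; the weights `w_x`, `w_h` and `b` are hand-picked, hypothetical values rather than learned ones, and this is not how Keras implements its RNN layers.

```python
import math

def simple_rnn(sequence, w_x=0.5, w_h=0.8, b=0.0):
    """Run a one-unit recurrent cell over a sequence of numbers.

    w_x, w_h and b are illustrative, hand-picked weights (assumptions).
    """
    state = 0.0  # internal state, empty before the first timestep
    states = []
    for x in sequence:  # iterate over the timesteps
        # The new state mixes the current input with the previous state.
        state = math.tanh(w_x * x + w_h * state + b)
        states.append(state)
    return states

states = simple_rnn([1.0, 0.0, 0.0])
print(states)
```

Only the first input is non-zero, yet the later states are non-zero as well, because each state carries information forward from the timesteps before it.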

Neural networks, sometimes called artificial neural networks (ANNs) or feedforward neural networks, are computational networks that were vaguely inspired by the neural networks in the human brain. They consist of neurons (also called nodes) which are connected like in the graph below. You start with a layer of input neurons where you feed in your feature vectors, and the values are then fed forward to a hidden layer. At each connection, the value is multiplied by a weight and a bias is added to it. This happens at every connection until you reach an output layer with one or more output nodes. You can have as many hidden layers as you wish. In fact, a neural network with more than one hidden layer is considered a deep neural network.² Today, neural networks are most widely applied in computer vision, voice recognition and natural language processing.
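
As a rough sketch of the feed-forward step described above, the snippet below pushes a feature vector through one hidden layer and one output node. The feature values, weights and biases are made up for illustration, and the helper name `dense_layer` is hypothetical.

```python
import math

def dense_layer(inputs, weights, biases, activation):
    """Compute one fully connected layer.

    weights[j][i] connects input i to neuron j; biases[j] belongs to neuron j.
    """
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        weighted_sum = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(activation(weighted_sum))
    return outputs

def relu(z):
    return max(0.0, z)

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical feature vector and hand-picked weights, for illustration only.
features = [1.0, 2.0]
hidden = dense_layer(features, weights=[[0.5, -0.25], [1.0, 1.0]],
                     biases=[0.0, -1.0], activation=relu)
output = dense_layer(hidden, weights=[[1.0, -1.0]], biases=[2.0],
                     activation=sigmoid)
print(hidden, output)
```

Training a network amounts to adjusting these weights and biases so that the output moves closer to the known labels.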

Data

The dataset is the same as the one used in the prior article. It was obtained from Kaggle and consists of training data and test data in two separate CSV files. There are 7,613 entries in the training dataset and 3,263 entries in the test dataset.

The training dataset contains [id, location, keyword, text and target] columns. The id column has a unique id for each tweet, location and keyword columns contain the location the tweet was sent from and a particular keyword in the tweet respectively; however, not every row has a value in these two columns. The text column contains the main text with URLs if there was a link in the tweet and the target column is for the label of each tweet. All columns are of string datatype apart from the target column which is an integer.

The test dataset contains the same columns except the target column.

Developing an RNN model

A collection of texts is called a corpus in NLP while a vocabulary is a list of all words found in a corpus; each word has an index. This enables you to create a vector for a sentence.
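
A small sketch of this idea, using two made-up tweets rather than rows from the dataset; the helper names `build_vocabulary` and `to_vector` are hypothetical.

```python
def build_vocabulary(corpus):
    """Map each distinct word to an integer index, starting at 1."""
    vocabulary = {}
    for text in corpus:
        for word in text.lower().split():
            if word not in vocabulary:
                vocabulary[word] = len(vocabulary) + 1
    return vocabulary

def to_vector(text, vocabulary):
    """Replace each known word with its index; unknown words are skipped."""
    return [vocabulary[w] for w in text.lower().split() if w in vocabulary]

# Made-up example tweets, not rows from the dataset.
corpus = ["forest fire near the town", "the fire is out"]
vocabulary = build_vocabulary(corpus)
vector = to_vector("fire near the school", vocabulary)
print(vocabulary)
print(vector)
```

The word "school" never appears in the corpus, so it has no index and is left out of the resulting vector.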

You cannot feed raw text directly into deep learning models. Text data must be encoded as numbers to be used as input or output for machine learning and deep learning models. The Tokenizer utility class vectorises a text corpus into lists of integers, with each integer representing a word in a document. num_words is an optional parameter which sets the maximum number of words to keep, based on word frequency; only the most common num_words-1 words will be kept.
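
To illustrate the frequency cap, here is a plain-Python sketch that mimics the behaviour described above. It is not the Keras implementation; the helpers `fit_word_index` and `encode_texts` and the sample texts are hypothetical.

```python
from collections import Counter

def fit_word_index(texts):
    """Rank words by frequency; the most frequent word gets index 1."""
    counts = Counter(word for text in texts for word in text.lower().split())
    ranked = [word for word, _ in counts.most_common()]
    return {word: rank for rank, word in enumerate(ranked, start=1)}

def encode_texts(texts, word_index, num_words=None):
    """Encode texts as index lists, dropping words ranked num_words or higher."""
    sequences = []
    for text in texts:
        seq = []
        for word in text.lower().split():
            idx = word_index.get(word)
            if idx is not None and (num_words is None or idx < num_words):
                seq.append(idx)
        sequences.append(seq)
    return sequences

# Made-up texts for illustration.
texts = ["fire fire fire flood", "flood fire storm"]
word_index = fit_word_index(texts)
sequences = encode_texts(texts, word_index, num_words=3)
print(word_index)
print(sequences)
```

With `num_words=3`, only the two most frequent words ("fire" and "flood") survive, which matches the num_words-1 rule; "storm" is dropped from the encoded output even though it still has an entry in the word index.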

The number of words for the tokenizer was set at 5000 and the tokenizer was fit on the training dataset. Then each tweet in the training and test dataset was converted to a sequence and vocabulary size was set.

from keras.preprocessing.text import Tokenizer

tokenizer = Tokenizer(num_words=5000)
tokenizer.fit_on_texts(words_train)

X_train = tokenizer.texts_to_sequences(words_train)
X_test = tokenizer.texts_to_sequences(words_test)

vocabulary_size = len(tokenizer.word_index) + 1

In order to feed this data into your RNN, all input documents must have the same length. This is done using pad_sequences(), with padding set to ‘post’ and maxlen set to max_length, the maximum number of words per text in the corpus. This adds trailing 0s to tweets that are shorter than the longest tweet in our dataset.

# Find the maximum number of words in a tweet.
# Note: len() counts words only if each item in words_train is a list of
# tokens; for raw strings it counts characters instead.
max_length = max(len(x) for x in words_train)
print(max_length)

from keras.preprocessing.sequence import pad_sequences

# Set the maximum number of words per document (for both training and testing) by padding sequences
X_train = pad_sequences(X_train, padding='post', maxlen=max_length)
X_test = pad_sequences(X_test, padding='post', maxlen=max_length)
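
The effect of post-padding can be sketched without Keras. The helper `pad_post` below is a hypothetical stand-in and the sequences are made up. Cutting longer sequences down to their first `maxlen` items is an assumption of this sketch; Keras controls that behaviour through a separate `truncating` argument.

```python
def pad_post(sequences, maxlen, value=0):
    """Pad each sequence with trailing `value`s up to maxlen.

    Longer sequences are cut to their first maxlen items (a choice made
    for this sketch only).
    """
    padded = []
    for seq in sequences:
        trimmed = list(seq[:maxlen])
        padded.append(trimmed + [value] * (maxlen - len(trimmed)))
    return padded

# Made-up integer sequences of different lengths.
sequences = [[4, 9], [7, 2, 5, 1], [3]]
max_length = max(len(s) for s in sequences)
padded = pad_post(sequences, max_length)
print(padded)
```

Every row now has the same length, so the batch can be treated as a rectangular array.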

Next, we build our Keras model architecture. I experimented with different layers and parameters to get a good enough score. The input to the model is a sequence of words (technically, integer word IDs) and the output is a binary label (0 or 1): 0 denotes a fake disaster tweet while 1 denotes a real disaster tweet. The Embedding layer is the first hidden layer in the network. It takes three arguments: input_dim, output_dim and input_length. input_dim is the vocabulary size derived above, output_dim is the size of the dense vector each word is mapped to, and input_length is the maximum length of documents in the corpus, also derived above. Activation functions introduce non-linearity into the network so that it can capture complex patterns; there are different ones, and you can experiment with them depending on your data and desired output. The sigmoid activation was used in the last layer because the output is binary. The Flatten layer reshapes the two-dimensional output of the Embedding layer (max_length × embedding_dim) into a single vector per tweet so that it can be passed to the Dense layer. Note that the layers used here (Embedding, Flatten and Dense) form a feedforward network; a recurrent layer such as layers.SimpleRNN or layers.LSTM could take the place of Flatten to make the model recurrent.

from keras.models import Sequential
from keras import layers

embedding_dim = 50

model = Sequential()
model.add(layers.Embedding(input_dim=vocabulary_size,
                           output_dim=embedding_dim,
                           input_length=max_length))
model.add(layers.Flatten())
model.add(layers.Dense(10, activation='relu'))
model.add(layers.Dense(1, activation='sigmoid'))

model.compile(optimizer='adam',
              loss='binary_crossentropy',
              metrics=['accuracy'])

model.summary()
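
To see what the Embedding and Flatten layers do to a single padded tweet, here is a plain-Python sketch. The embedding matrix values are made up (in the real model they are learned during training), and the helpers `embed` and `flatten` are hypothetical stand-ins for the Keras layers.

```python
def embed(sequence, embedding_matrix):
    """Look up one dense vector per word index."""
    return [embedding_matrix[idx] for idx in sequence]

def flatten(rows):
    """Concatenate the per-word vectors into one long vector."""
    return [value for row in rows for value in row]

# Hypothetical vocabulary of size 4 (index 0 is the padding index),
# with embedding_dim = 2. All values are made up.
embedding_matrix = [
    [0.0, 0.0],
    [0.1, 0.2],
    [0.3, 0.4],
    [0.5, 0.6],
]
padded_tweet = [2, 3, 0]  # max_length = 3
embedded = embed(padded_tweet, embedding_matrix)
flat = flatten(embedded)
print(embedded)
print(flat)
```

The flattened vector has max_length × embedding_dim entries (3 × 2 = 6 here). In the model above, the same reasoning gives max_length × 50 values per tweet going into the first Dense layer.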

In Keras, you need to compile your model first by specifying the loss function and optimizer you want to use while training, as well as any evaluation metrics you’d like to measure, such as ‘accuracy’. The loss function is an objective function used to evaluate how well the current weights fit the data; the value it computes is called the loss, and training tries to minimize it. The optimizer is the algorithm used to change the attributes of the neural network, such as weights and learning rate, in order to reduce the loss.
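
As a rough illustration of the loss used in this model, the snippet below computes mean binary cross-entropy by hand. The labels and predicted probabilities are made up, and the small `eps` clipping value is an assumption of this sketch.

```python
import math

def binary_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean binary cross-entropy between 0/1 labels and predicted probabilities."""
    total = 0.0
    for y, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)  # keep p away from 0 and 1 to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)

# Made-up labels and predictions.
labels = [1, 0, 1]
good_predictions = [0.9, 0.1, 0.8]
bad_predictions = [0.2, 0.7, 0.4]
good_loss = binary_crossentropy(labels, good_predictions)
bad_loss = binary_crossentropy(labels, bad_predictions)
print(good_loss, bad_loss)
```

Predictions that sit close to the true labels give a lower loss than predictions that sit far from them, which is the signal the optimizer uses to adjust the weights.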

You can now fit your model on your training data and evaluate it on both training and test data. If you change any parameter in the fit method and want to rerun that line, rebuild and compile the model again first; otherwise training continues from the previously computed weights. Batch size refers to the number of training samples used in one iteration (one weight update), and an epoch is one complete pass over the entire training dataset, so the number of epochs is the number of times every training sample is used to update the weights in the neural network. Batch size and number of epochs are parameters you need to define while training, and together they largely determine the total training time.

# Hold out the first 10 samples (one batch) for validation
X_valid, y_valid = X_train[:10], y_train[:10]
X_train2, y_train2 = X_train[10:], y_train[10:]

# Fit and evaluate the model
model.fit(X_train2, y_train2, epochs=50, verbose=False,
          validation_data=(X_valid, y_valid), batch_size=10)

loss, accuracy = model.evaluate(X_train, y_train, verbose=False)
print("Training Accuracy: {:.4f}".format(accuracy))

loss, accuracy = model.evaluate(X_test, y_test, verbose=False)
print("Testing Accuracy: {:.4f}".format(accuracy))
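
To make the relationship between batch size, epochs and training cost concrete, here is a small arithmetic sketch. The sample count of 7,603 is an assumption (the 7,613 training rows minus the 10 held out for validation); the actual count depends on how the data was split in the notebook. The helper `count_weight_updates` is hypothetical.

```python
import math

def count_weight_updates(n_samples, batch_size, epochs):
    """Return (updates per epoch, total updates over all epochs)."""
    steps_per_epoch = math.ceil(n_samples / batch_size)
    return steps_per_epoch, steps_per_epoch * epochs

# n_samples is an assumed value; batch_size and epochs match the fit call above.
steps, total = count_weight_updates(n_samples=7603, batch_size=10, epochs=50)
print(steps, total)
```

Under these assumptions there are 761 weight updates per epoch and 38,050 in total. Raising the batch size reduces the number of updates per epoch, while raising the number of epochs increases the total proportionally.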

You can keep tuning the parameters to improve model performance or, if you are satisfied with the final metrics, go ahead and save your model.

Conclusion

In this series, we experimented with classifying tweets using supervised machine learning algorithms (Gaussian Naive Bayes, Logistic Regression and Gradient Boosting Algorithm), tuning their hyperparameters to improve their performance, developing an RNN with Keras and evaluating the models. We also noted that the accuracy of the model on the test data could be improved by tuning the different parameters. However, this can be resource-intensive, so it depends heavily on the computing resources you have at your disposal.

For the full notebook for this article, check out my GitHub repo below: https://github.com/AniekanInyang/tweet-classification

References

[1] https://www.tensorflow.org/guide/keras/rnn

[2] https://realpython.com/python-keras-text-classification/
